The efficacy of mental health apps

In this new series, I’m exploring psychology and tech by highlighting exciting recent research.

Do mental health apps even work? Being a psychologist in digital mental health, my initial reaction to this question is, “Yes, surely they do! I mean, I know at least some apps have published positive findings. I hope most of them are effective. Hmm!”

Luckily, there are so many mental health apps - and plenty of literature on them by now - that we can really address this question. When we’re interested in general effects across all different sorts of apps and research groups, meta-analysis is a great place to start.

Why meta-analysis is so cool (for my fellow nerds)

Meta-analyses are powerful! For the unfamiliar, a meta-analysis takes a group of research papers, pulls out information from each one about its sample characteristics and statistical findings (like how much an app can reduce anxiety symptoms), then puts all that information together and analyzes the entire collection. A meta-analysis essentially gives you a weighted average for the effect across what is now a huge sample size - and a bigger sample size means an estimate that is probably closer to the truth.
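For fellow nerds who like to see the arithmetic, here is a minimal sketch of that weighted average. It assumes the simplest (fixed-effect, inverse-variance) approach, where each study is weighted by one over its squared standard error. The study names and numbers are made up for illustration, and the meta-analyses discussed below may well use more sophisticated models.

```python
import math

# Hypothetical studies: (label, effect size, standard error).
# These numbers are invented for illustration, not taken from any paper.
STUDIES = [
    ("Study A", 0.40, 0.20),
    ("Study B", 0.10, 0.10),
    ("Study C", 0.30, 0.15),
]


def pooled_effect(studies):
    """Return the inverse-variance weighted average effect and its standard error."""
    # More precise studies (smaller standard error) get larger weights.
    weights = [1 / se**2 for _, _, se in studies]
    total_weight = sum(weights)
    estimate = sum(w * d for w, (_, d, _) in zip(weights, studies)) / total_weight
    pooled_se = math.sqrt(1 / total_weight)
    return estimate, pooled_se


estimate, pooled_se = pooled_effect(STUDIES)
print(f"Pooled effect: {estimate:.3f} (SE {pooled_se:.3f})")
```

Notice that the pooled standard error is smaller than that of any single study, which is the "bigger sample, closer to the truth" idea in numbers.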

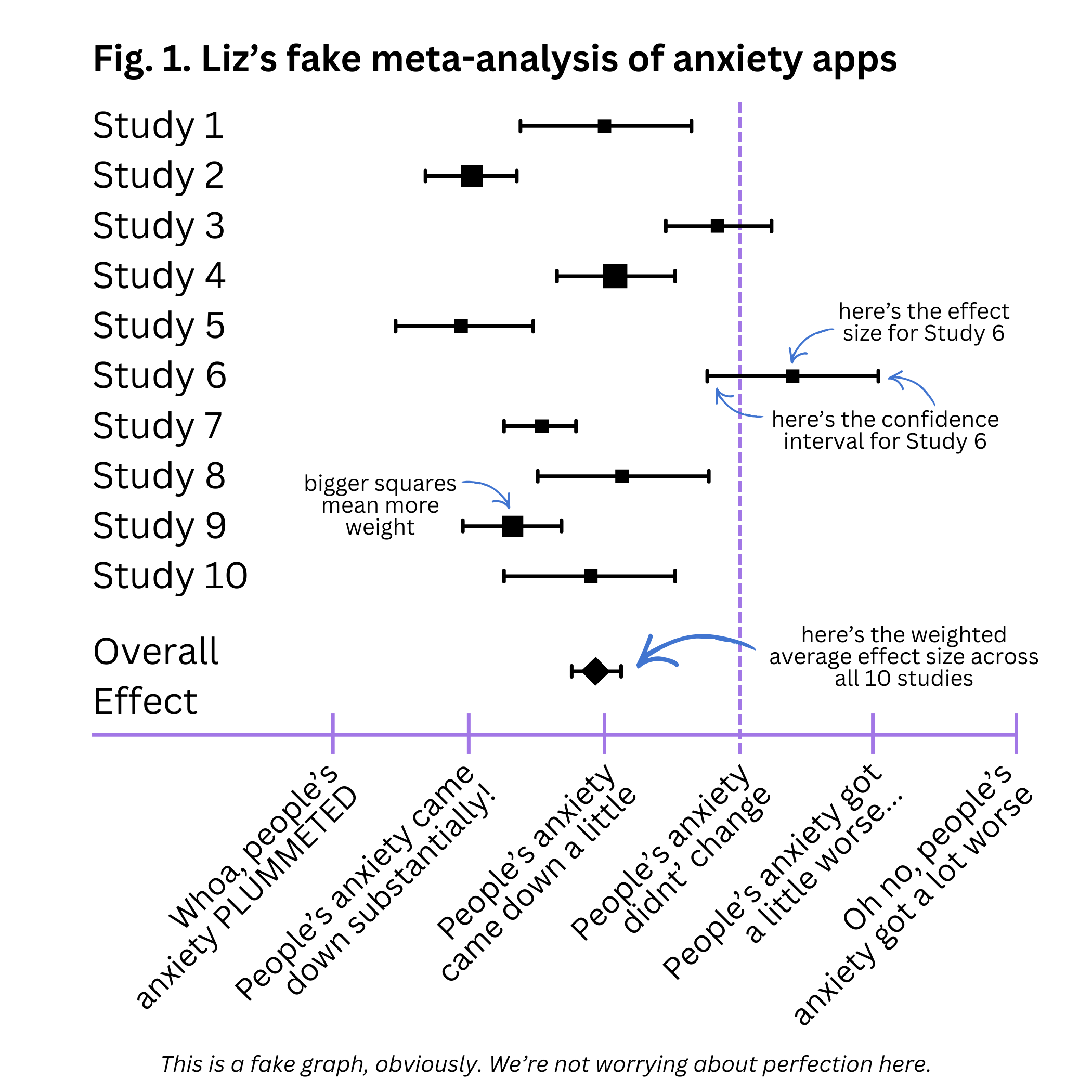

Below is an example of a meta-analysis forest plot. These plots show the effect size of each study (here, how much people’s anxiety symptoms changed as a result of app use), and the weighted average or overall effect.

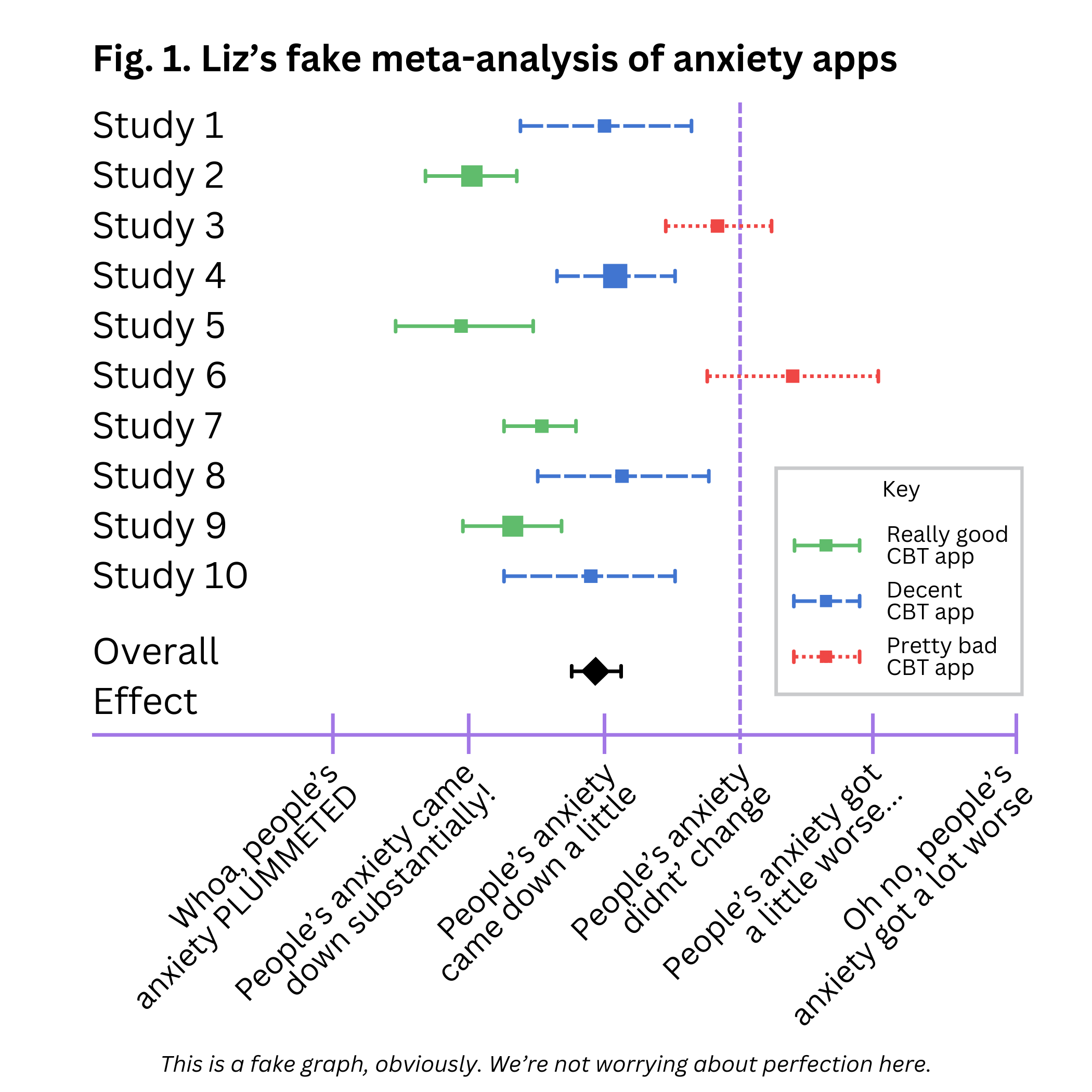

A meta-analysis can also tell you how much all of the included studies vary from each other, and whether other factors can help explain that variance. For example, it may be that some of the studies in your collection used a really good CBT app, some used a decent CBT app, and some used a pretty bad CBT app. That might explain why the effects of the studies differed so much (and we can test whether the really good app was significantly more effective than the others).
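One common way to put a number on "how much the studies vary" is Cochran's Q together with the I² statistic, which estimates the share of variation across studies that goes beyond what chance alone would produce. The sketch below assumes the same simple inverse-variance setup and the same invented numbers as before. It is an illustration, not a reproduction of any paper's analysis.

```python
# Hypothetical studies: (label, effect size, standard error). Invented numbers.
STUDIES = [
    ("Study A", 0.40, 0.20),
    ("Study B", 0.10, 0.10),
    ("Study C", 0.30, 0.15),
]


def heterogeneity(studies):
    """Return Cochran's Q and I-squared (as a percentage) for a set of studies."""
    weights = [1 / se**2 for _, _, se in studies]
    effects = [d for _, d, _ in studies]
    pooled = sum(w * d for w, d in zip(weights, effects)) / sum(weights)

    # Q: weighted squared distance of each study from the pooled effect.
    q = sum(w * (d - pooled) ** 2 for w, d in zip(weights, effects))
    df = len(studies) - 1

    # I-squared: proportion of Q in excess of its expected value (df), floored at 0.
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return q, i_squared


q, i_squared = heterogeneity(STUDIES)
print(f"Q = {q:.2f}, I-squared = {i_squared:.1f}%")
```

When I² is high, that is the cue to look for moderators (like app quality in the CBT example above) that might explain why studies disagree.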

Bigger sample sizes of all different sorts of people make for study findings that are more generalizable. Meta-analysis does this on steroids!

So what do these analyses say about mental health apps?

Meta-analysis of the effects of mental health apps on anxiety and depression

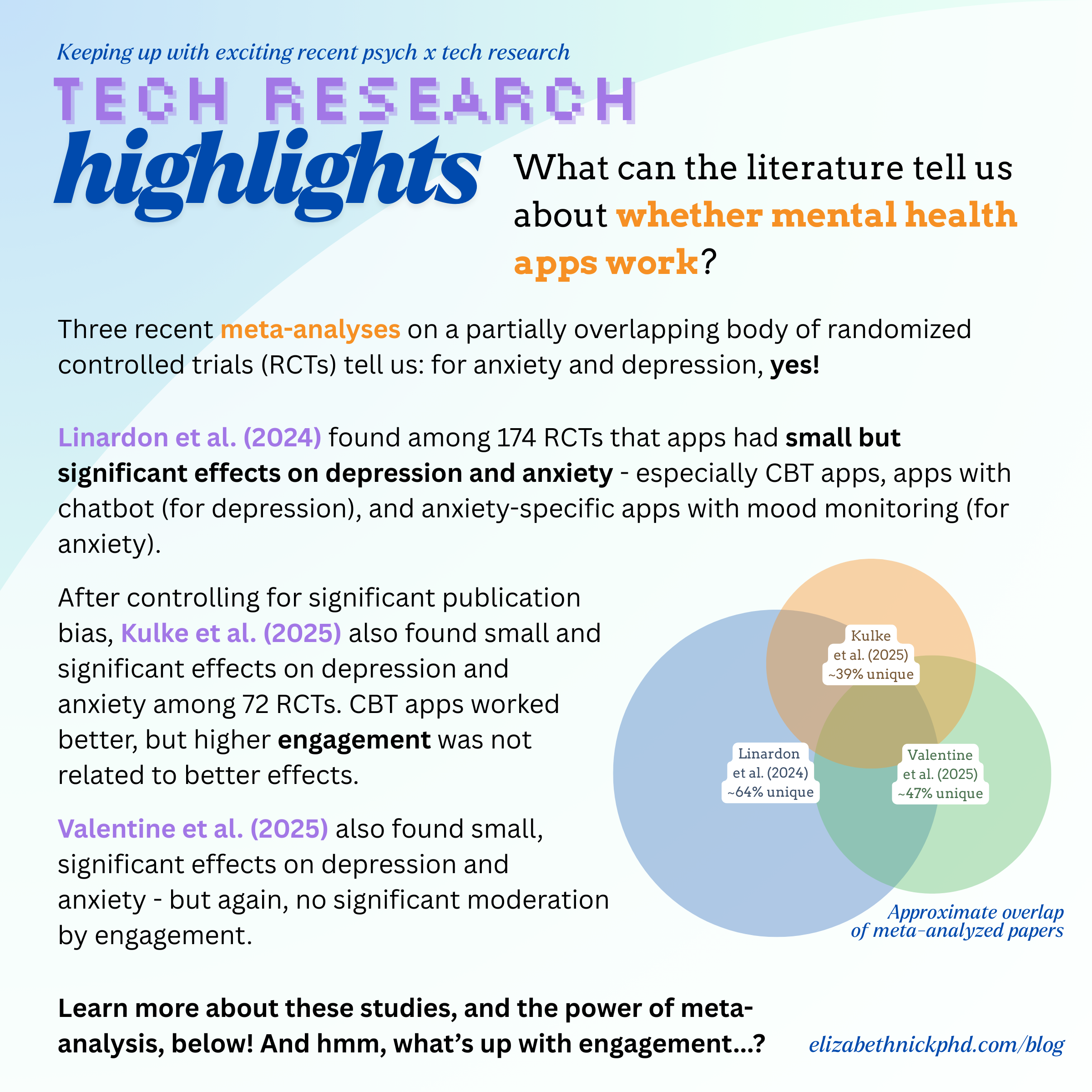

Linardon and colleagues (2024) conducted a very thorough meta-analysis of 176 randomized controlled trials (RCTs) on the effect of mental health apps (like Headspace, Calm, and Moodhacker) on depression and anxiety. Overall, compared to users in the control condition, users of the apps experienced a statistically significant (but small) reduction in depression symptoms. Effects were larger for CBT apps than for mindfulness or cognitive training apps, and larger when apps provided chatbots. Users also experienced a small but statistically significant reduction in generalized anxiety symptoms. For apps specifically focused on anxiety (rather than more general apps), effects were larger with CBT apps and when apps provided mood monitoring features. For both anxiety and depression, people seemed to benefit a little more when they had presumably used their apps longer (that is, when they reported their change in symptoms after 5-12 weeks vs. 1-4 weeks), but the difference was not statistically significant.

This is a great start! It seems there’s evidence that people report small reductions in anxiety and depression symptoms as a result of mental health app use, especially when apps provide particular clinical frameworks and digital affordances.

More meta-analytic evidence

A meta-analysis of 72 RCTs by Kulke and colleagues (2025) reported stronger results: medium, significant reductions in anxiety or depression symptoms due to mental health app use. However, reductions were classified as small after the authors adjusted their analyses for publication bias (the idea that studies with positive, significant results are more likely to get published than those with null results, meaning when we read the literature, we’re not seeing the whole picture). As in Linardon et al. (2024), effects on depressive symptoms were stronger when apps used CBT techniques. Interestingly, engagement was not a significant moderator of effects - that is, people who engaged more with the app did not show greater reductions in symptoms. Unfortunately, the precise definition of engagement was not available in this study (and perhaps not in many of the analyzed papers either).

The evidence for small but significant effects on depression and anxiety continues to accrue. Valentine and colleagues (2025) also found this result in their meta-analysis of 92 mental health app RCTs. And, yet again, they found that engagement (here, how much of the app program was completed) was not significantly related to a reduction in symptoms.*

So what’s up with app engagement?

What is engagement, exactly? How do apps try to keep users engaged (especially when there’s some evidence that smartphone use is habitual rather than responsive to notifications)? And why does it seem that engagement is not strongly related to how effective apps are? Next time, we’ll get a start on these questions.

*Wait a second… these meta-analyses have similar results and came out around the same time. Are they all reporting on the same collection of studies?

There’s definitely some overlap. From my calculations, about 64% of Linardon et al.’s (2024) collection is unique (not included in either of the other two meta-analyses); about 39% of Kulke et al.’s (2025) is unique; and about 47% of Valentine et al.’s (2025) is unique. The Venn diagram above is proportional, so you can see the amounts of overlap visually.
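If you want to run this kind of overlap check yourself, one way to do it is with sets of study identifiers. This is a minimal sketch with made-up IDs and a hypothetical helper called `unique_share`. The matching of studies across real reference lists is the hard part and is not shown here, and this is not necessarily how the percentages above were computed.

```python
def unique_share(target, others):
    """Fraction of studies in `target` that appear in none of the `others`."""
    seen_elsewhere = set().union(*others)
    return len(target - seen_elsewhere) / len(target)


# Hypothetical study IDs for three meta-analyses (invented for illustration).
meta_a = {"s1", "s2", "s3", "s4", "s5"}
meta_b = {"s4", "s5", "s6"}
meta_c = {"s5", "s7"}

for name, target, others in [
    ("A", meta_a, [meta_b, meta_c]),
    ("B", meta_b, [meta_a, meta_c]),
    ("C", meta_c, [meta_a, meta_b]),
]:
    print(f"Meta-analysis {name}: {unique_share(target, others):.0%} unique")
```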